For CIOs preparing the enterprise for AI deployment at scale, without the consulting bill, the compliance gaps, or the year-long timelines.

What is enterprise AI readiness?

Enterprise AI readiness is the state in which an organisation's workflows, governance policies, and data schema are mapped, classified, and structured well enough for AI agents to operate safely and produce auditable outcomes. It is the prerequisite for deploying AI in regulated or mission-critical environments, and it is where most enterprise AI programs quietly stall.

The problem is not the AI models. Models are increasingly commoditised. The problem is that the enterprise around the model is not in a state the model can act on. Workflows live in tribal knowledge, governance lives in PDFs nobody reads, and data schema varies by department. Until those three are mapped, an AI agent has nothing reliable to operate against.

The Agento 13-stage framework is a structured approach to closing that gap.

Why the consulting model is breaking down

For two decades, enterprise software readiness has been delivered as a consulting engagement. A team arrives, runs workshops, produces a statement of work, configures the system, and leaves. The pattern works, but slowly, expensively, and with results that depend heavily on which consultants happened to be assigned.

This pattern fails for AI for three specific reasons. First, AI deployments require continuous re-mapping as workflows evolve, and consulting engagements are point-in-time. Second, AI governance must be machine-readable to be enforceable, which a Word document cannot be. Third, AI agents need data schema that is classified by sensitivity and risk tier, which most enterprises have never formally produced.
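
To make the second point concrete, here is a minimal Python sketch of what "machine-readable governance" could look like. It is not the framework's actual policy format: the sensitivity levels, the `PolicyRule` type, the role names, and the `is_allowed` function are all hypothetical names chosen for illustration. The point is only that a rule expressed as data can be checked before every agent action, which a Word document cannot.

```python
from dataclasses import dataclass

# Hypothetical sensitivity levels, ordered from least to most sensitive.
SENSITIVITY_ORDER = ["public", "internal", "confidential", "restricted"]


@dataclass(frozen=True)
class PolicyRule:
    """One governance rule: the highest sensitivity an agent role may read."""
    agent_role: str
    max_sensitivity: str


def is_allowed(rules, agent_role, field_sensitivity):
    """Return True only if an explicit rule permits the access (deny by default)."""
    for rule in rules:
        if rule.agent_role == agent_role:
            return (SENSITIVITY_ORDER.index(field_sensitivity)
                    <= SENSITIVITY_ORDER.index(rule.max_sensitivity))
    return False  # no rule for this role means no access


# Illustrative rules; the role names are assumptions, not framework features.
RULES = [
    PolicyRule(agent_role="invoice_agent", max_sensitivity="internal"),
    PolicyRule(agent_role="hr_agent", max_sensitivity="confidential"),
]

print(is_allowed(RULES, "invoice_agent", "internal"))      # True
print(is_allowed(RULES, "invoice_agent", "confidential"))  # False
print(is_allowed(RULES, "unknown_agent", "public"))        # False
```

Denying by default when no rule matches is a deliberate choice in this sketch: an agent role nobody has written a policy for gets no access rather than unrestricted access.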

The shift from consulting to code is the recognition that the readiness work itself needs to be a product, not a service.

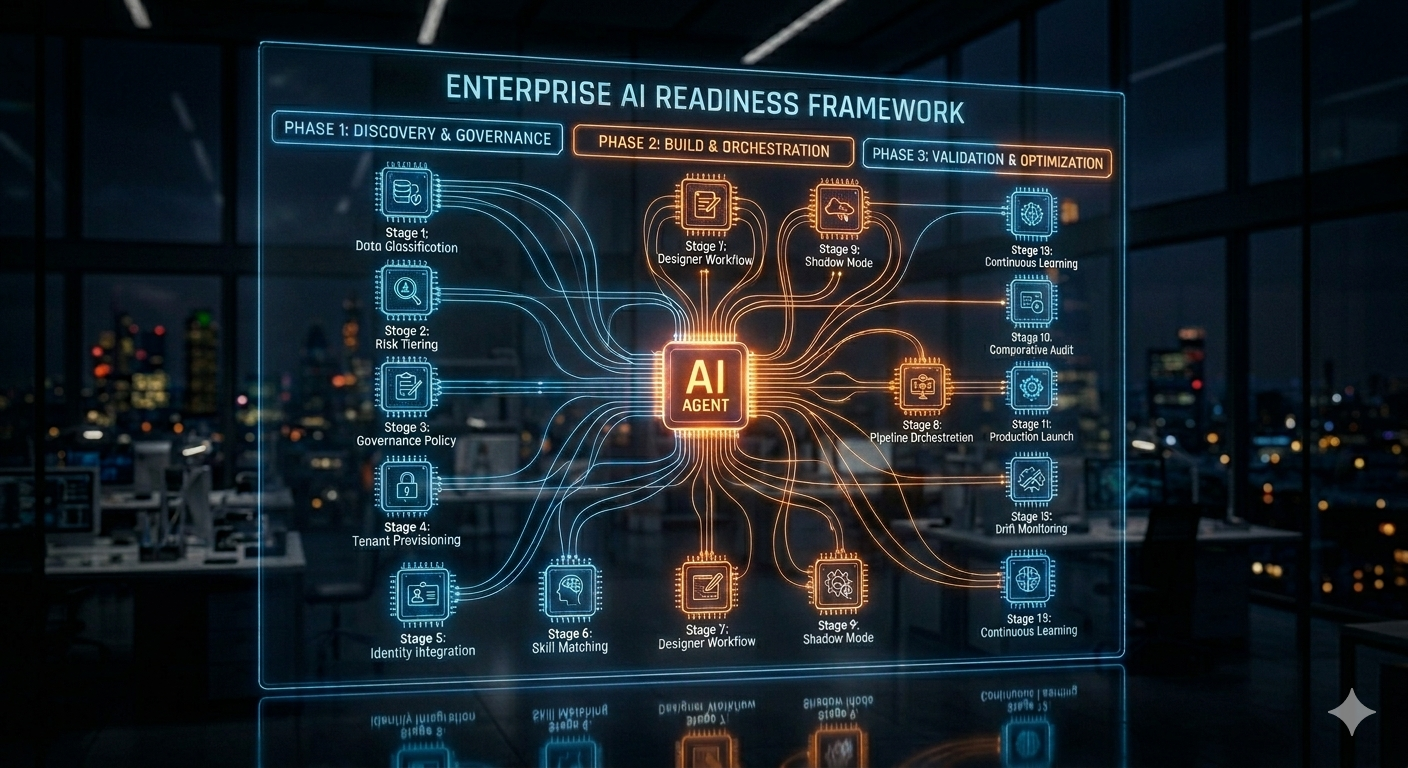

The 13-Stage Enterprise AI Readiness Framework

The framework groups 13 stages into three sequential phases. Each phase produces auditable execution artefacts that the next phase consumes.

Phase 1: Discovery & Governance (Stages 0–3)

The first phase establishes the compliant foundation everything else stands on. It automates three things: tenant provisioning, data classification, and risk tiering. By doing this work in code at the start, the framework avoids the most common failure mode of enterprise AI, which is discovering halfway through implementation that a data set is regulated and the architecture must be redone. CIOs who have lived through a GDPR or HIPAA remediation cycle will recognise the value here.
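
As a rough illustration of data classification and risk tiering done in code, the Python sketch below assigns a tier to a dataset from the categories of its fields. Everything in it is an assumption made for the example: the category names, the `REGIME_BY_CATEGORY` table, the three tier labels, and the `tier_dataset` function are hypothetical, and which regime actually applies to a dataset is a legal question this table does not answer.

```python
# Illustrative mapping only: which regime a data category falls under depends
# on jurisdiction and legal review, not on this table.
REGIME_BY_CATEGORY = {
    "health_record": "HIPAA",
    "personal_identifier": "GDPR",
}


def tier_dataset(field_categories):
    """Return (risk_tier, regimes) for a dataset described as {field: category}."""
    categories = set(field_categories.values())
    regimes = sorted({REGIME_BY_CATEGORY[c] for c in categories
                      if c in REGIME_BY_CATEGORY})
    if regimes:
        tier = "high"      # at least one field is in a regulated category
    elif "financial" in categories:
        tier = "medium"    # sensitive, but not mapped to a regime here
    else:
        tier = "low"
    return tier, regimes


# Hypothetical dataset: field names mapped to data categories.
claims = {
    "patient_id": "personal_identifier",
    "diagnosis": "health_record",
    "amount": "financial",
}

print(tier_dataset(claims))              # ('high', ['GDPR', 'HIPAA'])
print(tier_dataset({"sku": "product"}))  # ('low', [])
```

Running a check like this at the start of onboarding surfaces the regulated data set before the architecture is chosen, rather than halfway through implementation.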

Phase 2: Build & Orchestration (Stages 4–8)

The second phase connects systems and composes workflows. Wizards handle the integration plumbing, such as identity providers, source systems, and data stores. A Skill Matching Agent and a Designer compose the actual workflows the customer will run. This is the difference between handing the enterprise a toolkit and handing it a finished pipeline that already understands what it is trying to build.

Phase 3: Validation & Optimization (Stages 9–12)

The third phase is the one most consulting-led rollouts skip, and it is where most of them fail in the first year. Before anything goes live, the framework runs a Shadow Mode in which AI agents operate alongside the existing process without taking action. Outputs are compared, trust is built, and only then does the system get authority to act. Post-launch, the same instrumentation monitors for drift and feeds a continuous learning loop.
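
The Shadow Mode comparison described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the framework's Shadow Runner: the function names, the 95% agreement threshold, and the 100-case minimum are hypothetical values picked for the example.

```python
def shadow_report(human_decisions, agent_decisions):
    """Compare agent outputs with the existing process, case by case.

    Both arguments map case_id -> decision. Only cases present in both
    are compared, because the agent takes no action of its own in shadow.
    """
    shared = sorted(human_decisions.keys() & agent_decisions.keys())
    mismatches = [c for c in shared if human_decisions[c] != agent_decisions[c]]
    rate = 1 - len(mismatches) / len(shared) if shared else 0.0
    return rate, len(shared), mismatches


def ready_to_act(rate, compared, threshold=0.95, min_cases=100):
    """Grant authority only after enough cases at a high enough agreement rate.

    The threshold and minimum case count are assumed values for illustration.
    """
    return compared >= min_cases and rate >= threshold


human = {"c1": "approve", "c2": "reject", "c3": "approve", "c4": "approve"}
agent = {"c1": "approve", "c2": "reject", "c3": "reject", "c4": "approve"}

rate, compared, mismatches = shadow_report(human, agent)
print(rate, compared, mismatches)    # 0.75 4 ['c3']
print(ready_to_act(rate, compared))  # False
```

The list of mismatched cases matters as much as the rate: those are the cases a human reviews to decide whether the agent or the existing process was wrong.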

SME vs Enterprise Track: Which path applies?

Not every organisation needs the same depth of process. The framework supports two tracks that share infrastructure but differ in pace and rigour.

| Dimension | SME Track | Enterprise Track |
|---|---|---|
| Target timeline | 5–10 business days | 6–12 weeks |
| Compliance depth | Standard controls | Deep, multi-region, formal change management |
| Workflow complexity | Single-domain, low integration count | Cross-domain, many integrations, audit-grade documentation |
| Human review | Reserved for genuine decisions | Embedded at every stage gate |
| Security standards | Identical Execution Artefacts and Agent Shield | Identical Execution Artefacts and Agent Shield |

The unified security row is the most important one. An SME on the fast track does not get a watered-down security posture in exchange for speed. They get the same auditable artefacts and the same Agent Shield protection an enterprise gets, applied to a smaller scope. This matters for CIOs who acquire smaller business units and need to fold them into enterprise compliance without re-onboarding.

How does the framework cut AI onboarding cycle time by 50%?

The 50% cycle-time reduction comes from automating two specific bottlenecks. The first is discovery, which has historically meant weeks of stakeholder interviews, workflow mapping sessions, and documentation. The second is operator bootstrapping, which has historically required shadowing an experienced consultant for the first deployment cycle. Neither task ever genuinely depended on human judgement, and both compress dramatically when handed to code.

It is worth being precise about what the 50% target is not. It is not a promise to skip compliance steps. It is not achieved by parallelising work that genuinely needs to be sequential. It is achieved by removing the human bottleneck from work where the human was a transcription layer, not a decision layer.

The capabilities roadmap: V1, V2, V3

The framework ships in three deliberate waves. CIOs evaluating the platform should understand which capabilities exist today and which arrive on what horizon.

V1: Core workflow

The first version delivers tenant provisioning and Shadow Runner v1. A customer can be onboarded, workflows can run in shadow, and the artefacts produced are good enough to audit. This is the floor. Everything works end to end.

V2: Scale and speed

The second wave adds event-log mining and synthetic data generation. Event-log mining lets the platform learn how an enterprise actually works by observing existing systems, rather than relying entirely on interviews. Synthetic data generation lets the platform stress-test workflows before they touch real data. Together, these move the framework from automated checklist to informed automation.
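
One common way to mine an event log is to count which activity directly follows which within each case, a standard process-mining step. The Python sketch below shows that technique on a toy log. The source does not say which algorithm the platform uses, so treat this as an illustration of the general idea; the log format of `(case_id, activity, timestamp)` tuples and the activity names are assumptions.

```python
from collections import Counter, defaultdict


def directly_follows(event_log):
    """Count how often activity A is immediately followed by B within a case.

    event_log is an iterable of (case_id, activity, timestamp) tuples.
    """
    traces = defaultdict(list)
    # Order events by case, then by time, so each trace is chronological.
    for case_id, activity, _ts in sorted(event_log, key=lambda e: (e[0], e[2])):
        traces[case_id].append(activity)

    counts = Counter()
    for activities in traces.values():
        counts.update(zip(activities, activities[1:]))
    return counts


# Toy purchase-order log; timestamps are plain integers for simplicity.
LOG = [
    ("po-1", "create", 1), ("po-1", "approve", 2), ("po-1", "pay", 3),
    ("po-2", "create", 1), ("po-2", "pay", 2),
    ("po-3", "create", 1), ("po-3", "approve", 2), ("po-3", "pay", 3),
]

flows = directly_follows(LOG)
print(flows[("create", "approve")])  # 2
print(flows[("create", "pay")])      # 1
```

In this toy log, the single `create` to `pay` transition is the kind of finding interviews tend to miss: one order was paid without an approval step, whatever the documented process says.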

V3: Self-tuning

The third wave is where the framework starts improving itself. Proactive policy updates push compliance and configuration changes to deployed tenants without re-onboarding. An offline learning loop takes signals from production and feeds them back into the framework's defaults, so each new deployment benefits from every previous one. This is the long-term payoff: a readiness system that gets better with every customer instead of degrading.

What this changes for the CIO

The deeper shift is not about onboarding speed. It is about what enterprise AI readiness is. When the playbook lives in code, three things change at once that matter at the CIO level.

First, the work becomes inspectable. Anyone (internal audit, external regulators, the board) can look at the framework and see exactly what gets configured, what gets validated, and what produces an artefact. That is a different conversation from defending a 200-page statement of work nobody read.

Second, the work becomes improvable. A bug in stage 7 gets fixed once, for everyone, instead of being rediscovered by every new consulting engagement. Improvements compound across the portfolio.

Third, the work becomes accountable. The execution artefacts produced at each stage stand on their own, without depending on someone's memory of what was decided in a workshop. For CIOs operating under SOX, GDPR, HIPAA, or any of the new AI-specific regimes coming online, that auditability is the actual product.

Closing thought

Enterprise AI readiness is the part of the AI journey where promises get tested against reality. A framework that turns that test into a repeatable, observable, improvable process is doing something more interesting than saving time. It is making the work itself visible. The 50% cycle-time number is the headline. The auditable, self-tuning system underneath it is the actual product CIOs are buying.